Hello everyone, and welcome to the first instalment in a new weblog dedicated solely to documenting a new DIY supercomputer project: the aptly named Grovercomp. Today I will explain what the goal is with Grovercomp, why I’m building it, and what to expect from this weblog moving forward.

Background

I am a computer programmer by trade, and a computer scientist by profession. So is my close colleague and partner-in-crime Charles Rosenbauer, and together we have been collaborating on projects we both see value in for almost as long as we’ve known each other.

An abandoned server

For the longest time I worked as an unarmed security guard, stationed at all sorts of industrial outposts around the Research Triangle as a literal watchman. (For the record, no, nobody watches the watchmen. I checked.) One of my last contracts was supervising an old decommissioned factory, and among the junk they were having contractors throw away was a lot of dated server equipment, namely 10Gb and 40Gb managed Ethernet switches and a 24 TB storage server, all of which I came into possession of for a gracious $0. It’s amazing what people will throw away.

Gathering the Infinity Stones

Anyway, I saw a lot of utility in having these things. Counting my blessings, I found I had a relative lack of pressure to get a time-sink type of job, a gigabit fibre home internet connection, and a drive to work with Charles on things we really believe could change the face of computing forever. So I decided to start looking into cheap ways to get hold of the one digital resource I was still sorely lacking: compute power.

Enter Xeon Phi

Very quickly I gravitated towards the now-defunct Xeon Phi computing platform, as I was just floored at how cheap some of these cards had become on sites like schmeBay. I bought two of them, and then four more, and then the other night bought 14 more in one go, at an average price of $40.50/card. All of them are the same model: the Haswell-contemporary Knights Corner architecture, featuring 60 cores and 8 GiB of on-board RAM each; this is the SKU called the 5110P. Once again, it’s amazing what people will (nearly) throw away. Charles and I know that it’s an artefact of poor software that these things are so worthless to everyone else, and that’s exactly why they’re perfect for us.

The build plan

If you kept count, you know that I bought twenty cards in total. Two of them will be reserved for personal rigs, one each for Charles and me, while the other 18 will be deployed in triads across six compute nodes, which collectively make up the Grovercomp compute cluster. These nodes will be named after the first six letters of the Greek alphabet: Alpha, Beta, Gamma, Delta, Epsilon, and Zeta.

Specs & deets

While I have not nailed down specific model choices yet, as prices and stock fluctuate wildly, the general plan is to get something with these minimum specs for each of the six nodes:

A dual-socket LGA 2011-3 motherboard with at least three double-wide PCIe slots for the coprocessors

High core count Xeon E5 CPUs in both sockets, either v3 (22nm Haswell) or better yet v4 (14nm Broadwell)

8 GiB DDR4 ECC DIMMs ×8, simply to get quad channel memory with both sockets

At least two 1kW+ Supermicro redundant PSUs

No over-engineering

That’s kind of it for the meat of things, really! There will be a fair amount of sysadmin work to be done once they are built, and I have no intention of over-engineering any of it. I will probably go with CentOS and harness resources together myself as needed. It’s actually a saner path when you already write the key code yourself.

Utility

First of all, my napkin math shows me that at full load, this cluster can draw over 4 kilowatts of mains power. It goes without saying that I’m going to have to run lines to a few additional breakers in my main service panel, and while I’m at it I’ll go ahead and add another one for the window air conditioner that’ll sit next to the cluster, and maybe another for the rest of it all. Over-provisioning is a good thing, right?
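For anyone who wants to redo the napkin math, here is a rough sketch in Python. Only the node, card and socket counts come from this post. The 225 W figure is Intel's rated TDP for the 5110P; the CPU wattage, the per-node overhead and the 120 V / 15 A circuit with an 80% continuous-load margin are all assumptions I picked for illustration, so swap in your own numbers.

```python
import math

# Counts taken from the build plan in this post.
NODES = 6
PHIS_PER_NODE = 3
SOCKETS_PER_NODE = 2

PHI_TDP_W = 225        # Intel's rated TDP for the Xeon Phi 5110P
CPU_TDP_W = 120        # ASSUMPTION: depends on which Xeon E5 v3/v4 gets bought
NODE_OVERHEAD_W = 100  # ASSUMPTION: board, DIMMs, fans, drives, PSU losses

# ASSUMPTION: a 120 V / 15 A branch circuit loaded to 80% for continuous use.
CIRCUIT_USABLE_W = int(120 * 15 * 0.8)


def phi_only_power_watts() -> int:
    """Rated draw of the 18 cluster coprocessors by themselves."""
    return NODES * PHIS_PER_NODE * PHI_TDP_W


def cluster_power_watts() -> int:
    """Rated draw of all six nodes under the assumptions above."""
    per_node = (PHIS_PER_NODE * PHI_TDP_W
                + SOCKETS_PER_NODE * CPU_TDP_W
                + NODE_OVERHEAD_W)
    return NODES * per_node


def circuits_needed(total_watts: int) -> int:
    """How many assumed circuits it takes to carry the given load."""
    return math.ceil(total_watts / CIRCUIT_USABLE_W)


if __name__ == "__main__":
    total = cluster_power_watts()
    print("coprocessors only:", phi_only_power_watts(), "W")
    print("whole cluster    :", total, "W")
    print("circuits needed  :", circuits_needed(total))
```

The coprocessors alone already account for the "over 4 kilowatts" figure at rated TDP; everything else in the sketch rests on the assumed numbers.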

It’s up to us

Okay, but more concretely, there are several things we can put this compute cluster to good use for. Odds are good that most, if not all, of these things are tasks only Charles and I have the cause to program in the way necessary to fully leverage the highly parallel and vectorised nature of the Xeon Phis. But that’s okay.

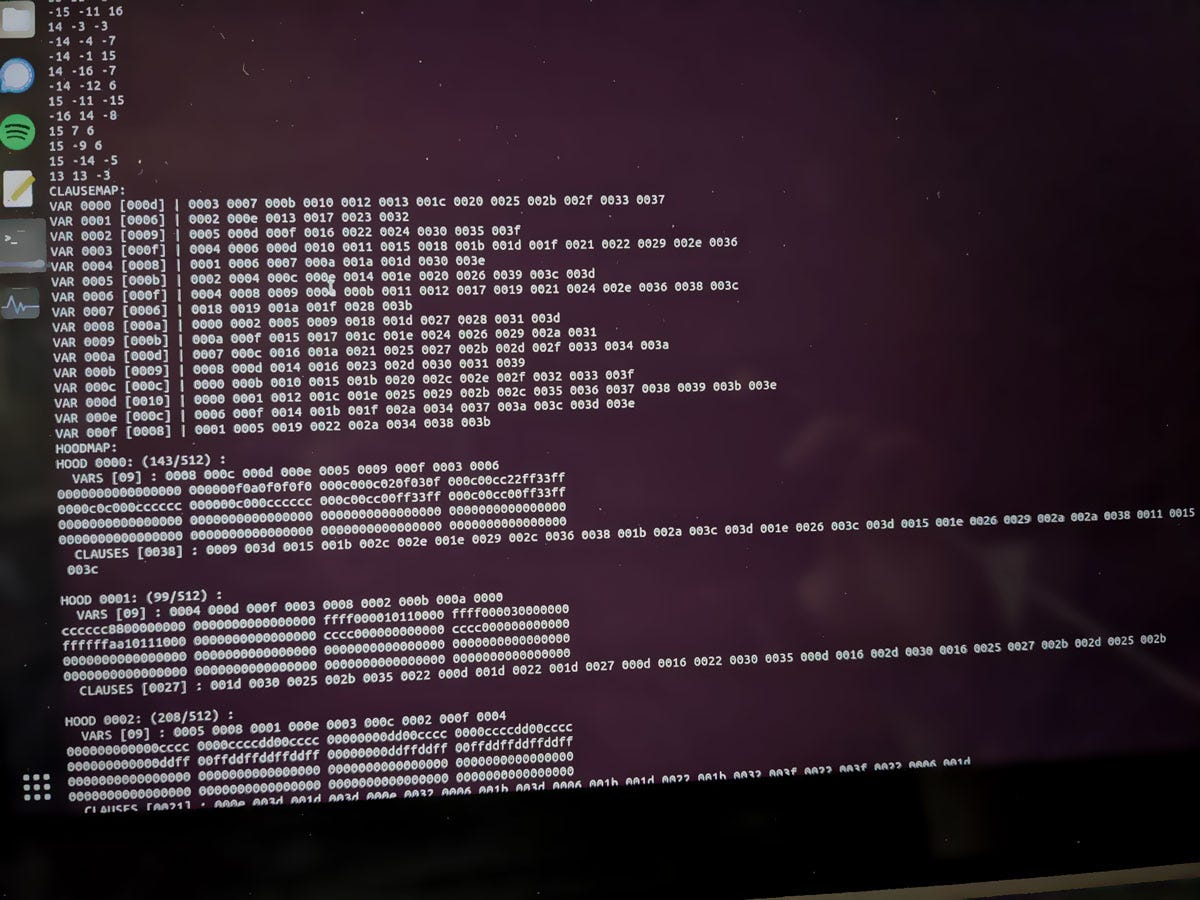

Einstein-level research

One of the research subjects Charles has been putting a lot of focus into lately is the SAT solver. Here is a screenshot he took of some preliminary results of a SAT instance, showing a 1024-bit lattice containing every possible arrangement of 10 bits:
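To make the counting concrete: 10 bits have 2^10 = 1024 possible arrangements, so a structure holding one bit per arrangement is 1024 bits wide. The Python sketch below only illustrates that counting by brute force. It is not Charles' solver or his lattice layout, and the toy clause list and function names are made up for the example.

```python
from itertools import product

N_VARS = 10  # 10 boolean variables -> 2**10 = 1024 arrangements


def satisfies(assignment, clauses):
    """True if the assignment satisfies every clause.

    assignment: tuple of bools, one per variable.
    clauses: list of clauses, each a list of nonzero ints where
    k means "variable k is true" and -k means "variable k is false".
    """
    return all(
        any(assignment[abs(lit) - 1] == (lit > 0) for lit in clause)
        for clause in clauses
    )


def solution_mask(clauses, n_vars=N_VARS):
    """Return an integer with one bit per arrangement of the variables.

    Bit i is set when the i-th arrangement satisfies the formula, so the
    result fits in 2**n_vars bits (1024 bits for 10 variables).
    """
    mask = 0
    for index, bits in enumerate(product((False, True), repeat=n_vars)):
        if satisfies(bits, clauses):
            mask |= 1 << index
    return mask


if __name__ == "__main__":
    # Hypothetical toy formula: (x1 or not x2) and (x2 or x3) and (not x1 or not x3)
    toy_clauses = [[1, -2], [2, 3], [-1, -3]]
    mask = solution_mask(toy_clauses)
    print(bin(mask).count("1"), "satisfying arrangements out of", 2 ** N_VARS)
```

Brute force like this stops being practical well before the variable count gets interesting, which is exactly why a better solver is worth researching.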

Data science

Parallelisation is the pinnacle of scalable high-performance computing, and having this cluster enables us to experiment with algorithms at a scale normally unavailable to un-monied people like us. This system is just powerful enough and just complex enough (with 10Gb backplanes) that we can faithfully extrapolate performance factors for much, much larger compute clusters, up to even the world’s most powerful supercomputers, without taking gross liberties of interpretation of our data.

Software debugging and tooling

Even as just a single card in a personal rig, these Xeon Phi coprocessors also provide a fertile breeding ground for exploring new frontiers of debugging and software development tooling that, in Charles’ view, is woefully neglected. This is also a considerable portion of my interest in the Grovercomp: to create a workable software development toolset, principally anchored by a kernel (Hinterlib), an editor (Quindle), and an assembler-compiler (Oración/Gordian/FCC/Sirius) as the pillars of the toolset. Testing these on old dogs like my Compaq i486 is as crucial to their quality as testing them on machines that are as close as we may ever get to the state of the art.

This weblog

This place will serve as a kind-of-live feed of every major physical development activity that goes into the building of the Grovercomp, in the same fashion as the classic Groverhaus to which it owes its name and logo.

When it’s done, it’s done (mostly)

This publication will stop being actively updated once the server is completely finished and operational, save for occasional updates about outages, upgrades and anything else that may happen to it over its lifetime.

No paywall here

While I will not be paywalling any content here (this is Grovercomp, after all, so posterity is king), I do highly recommend giving my main newsletter a look-see if my content consistently interests you. The bulk of my writings there also revolve around computer science.

This is why we can’t have nice things

One of the biggest reasons I’m building such a strangely-architected computer is that, in a financial sense, I am quite poor, and cannot afford nice things like most people in tech. I’m actually due to ship the M1 Max MBP I’m typing this up on back to my former employer, and it cost twice as much as this whole project will. I’m very gratefully taking advantage of a lot of non-material and non-monetary wealth I have earned in adulthood: my household, my family of roommates, and the collaborative effort we all take to make this house work so well in a way we could never do alone. Grovercomp is therefore my crazy pet project, and I will end up paying for it by selling kittens and donating plasma, if my strategy is right. Supporting me on Substack really helps.

Hold up, you said petascale!

I did, and I should be fair and say that our definition of “petascale” for this computer is in the context of 1-bit vector ops, not floating-point as is typical for measurements of compute power. Xeon Phi coprocessors have four-way hyperthreading, which gives them four “hardware threads” per physical core, of which each card has 60. That comes to 4,320 hardware threads from 1,080 cores across the 18 cluster cards, not counting the regular old Xeon cores and threads; we’re not sure yet how many of those there will be, though we are sure all 12 sockets will be filled.
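Here is the tally spelled out as a small Python sketch, in case you want to plug in a different card count. The card, core and thread figures are the ones from this post. The 512-bit vector width is the Knights Corner register width, which I have not mentioned above, and the "bit lanes" number is just cores times vector width, not a measured throughput.

```python
CLUSTER_CARDS = 18    # six nodes with three cards each
CORES_PER_CARD = 60   # Xeon Phi 5110P
THREADS_PER_CORE = 4  # four-way hyperthreading
VECTOR_BITS = 512     # Knights Corner vector register width (not stated above)


def cluster_totals(cards: int = CLUSTER_CARDS):
    """Return (cores, hardware threads, 1-bit vector lanes) for the coprocessors."""
    cores = cards * CORES_PER_CARD
    threads = cores * THREADS_PER_CORE
    bit_lanes = cores * VECTOR_BITS  # one 512-bit register's worth per core
    return cores, threads, bit_lanes


if __name__ == "__main__":
    cores, threads, bit_lanes = cluster_totals()
    print(f"{cores:,} cores, {threads:,} hardware threads, {bit_lanes:,} bit lanes")
```

Calling `cluster_totals(20)` gives the same figures with the two personal-rig cards included.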

Thanks for reading and welcome to the Grovercomp weblog!